Introduction: Welcome to Sly Automation’s guide on performing object detection using YOLO version 3 and text recognition, along with mouse click automations and screen movement using PyAutoGUI. In this tutorial, we will walk you through the steps required to implement these techniques and showcase an example of object detection in action.

Cloning the yolov3 Project and installing the requirements

Github Source: https://github.com/slyautomation/osrs_yolov3

This project uses PyCharm to run and configure the code. Need help installing PyCharm and Python? See Install Pycharm and Python: Clone a github project

Note: The YOLOv3 project only works with Python 3.7, so make sure to configure your PyCharm environment with the Python 3.7 interpreter.

Download YOLOv3 weights

https://pjreddie.com/media/files/yolov3.weights (save this in the ‘model_data’ directory)

An alternative is the tiny weights file, which needs less computing power and detects faster but is less accurate.

https://github.com/smarthomefans/darknet-test/blob/master/yolov3-tiny.weights (save this in the ‘model_data’ directory)

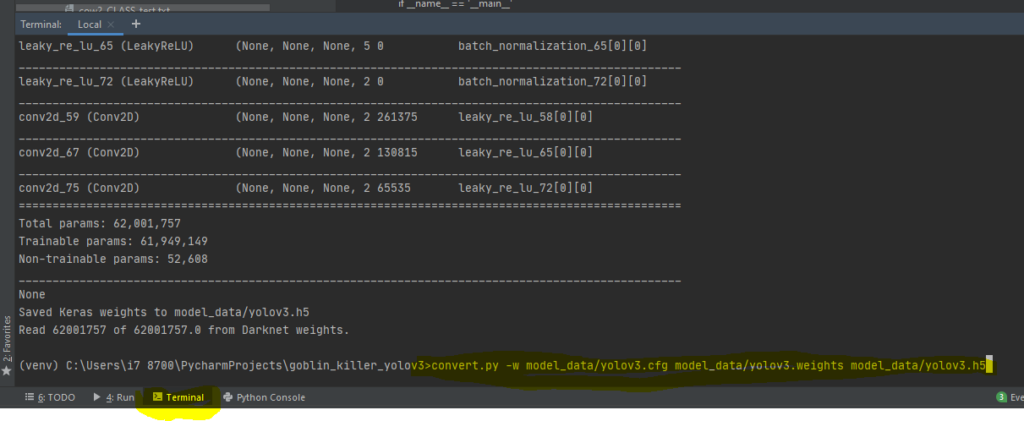

Convert the Darknet YOLO model to a Keras model.

Type the following in the terminal (in PyCharm, the terminal window is at the bottom of the page):

pip install -r requirements.txt

python convert.py -w model_data/yolov3.cfg model_data/yolov3.weights model_data/yolov3.h5
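Before running the conversion, it can help to confirm that the files the command expects are actually in model_data. The following is a minimal sketch, not part of the repository: `missing_model_files` is a hypothetical helper, and the file names are taken from the convert.py command in this section.

```python
import os

# File names taken from the convert.py command; the folder name is from this guide.
REQUIRED_FILES = ("yolov3.cfg", "yolov3.weights")


def missing_model_files(model_dir="model_data", required=REQUIRED_FILES):
    """Return the required files that are not present in model_dir."""
    return [name for name in required
            if not os.path.isfile(os.path.join(model_dir, name))]
```

Calling `missing_model_files()` from the project root returns an empty list when both files are in place; anything it returns still needs to be downloaded or moved.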

Download Resources

Note: If there are issues converting the weights to h5, use these YOLO weights in the interim (save them in the model_data folder): https://drive.google.com/file/d/1_0UFHgqPFZf54InU9rI-JkWHucA4tKgH/view?usp=sharing

Large files, including the OSRS cow and goblin weights file, are on Google Drive: https://drive.google.com/folderview?id=1P6GlRSMuuaSPfD2IUA7grLTu4nEgwN8D

Step 1: Setting Up the Environment

To begin, we need to set up our development environment. Start by creating an account on NVIDIA Developer’s website. Once done, download the CUDA Toolkit compatible with your GPU. We will use CUDA 10.0 for this example. Install the toolkit and ensure that all components are properly installed.

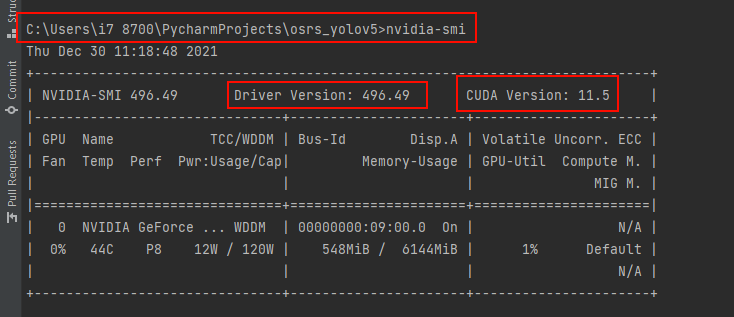

Check your CUDA version

Check whether your GPU is supported at https://developer.nvidia.com/cuda-gpus, then use a CUDA version that suits your GPU model and the latest cuDNN for that CUDA version.

Type in the terminal: nvidia-smi

The highest version my setup supports is 11.5, but for simplicity I can use an earlier version, namely 10.0.
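The same check can be scripted. This is a rough sketch that assumes the nvidia-smi header contains a "CUDA Version: X.Y" field, as recent drivers print it; the function names are hypothetical and not part of the project.

```python
import re
import subprocess


def parse_cuda_version(smi_output):
    """Extract the 'CUDA Version: X.Y' value from nvidia-smi text, or None."""
    match = re.search(r"CUDA Version:\s*(\d+)\.(\d+)", smi_output)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def supports_toolkit(max_version, wanted=(10, 0)):
    """True if the driver's maximum CUDA version is at least the wanted toolkit."""
    return max_version is not None and max_version >= wanted


def query_driver_cuda_version():
    """Run nvidia-smi (must be on PATH) and return the version it reports."""
    result = subprocess.run(["nvidia-smi"], stdout=subprocess.PIPE,
                            universal_newlines=True)
    return parse_cuda_version(result.stdout)
```

On a machine with an NVIDIA driver installed, `supports_toolkit(query_driver_cuda_version())` indicates whether the CUDA 10.0 toolkit used in this guide is within the range the driver reports.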

cuda 10.0 = https://developer.nvidia.com/compute/cuda/10.0/Prod/local_installers/cuda_10.0.130_411.31_win10

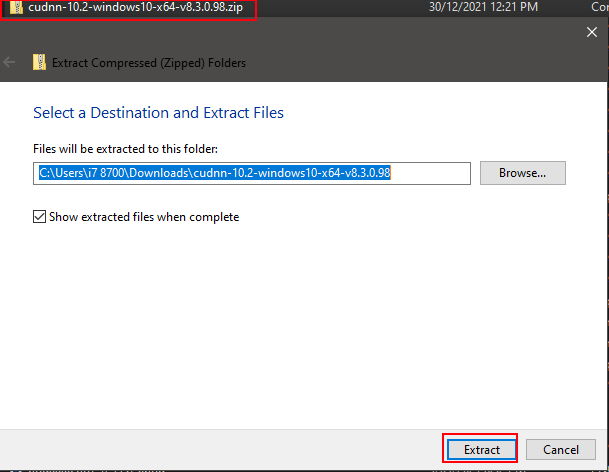

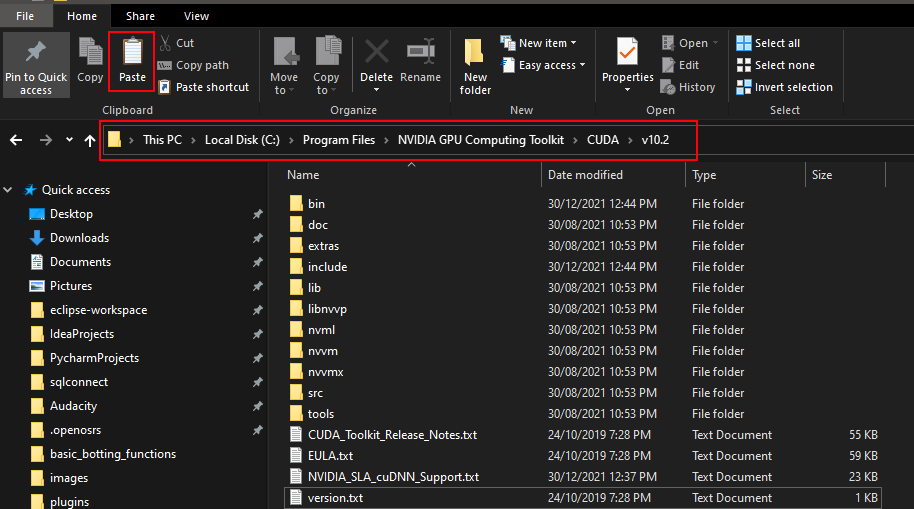

Step 2: Downloading and Configuring cuDNN

Next, download the cuDNN library compatible with your CUDA version. Extract the files and copy them to the CUDA installation directory, overwriting any existing files.

Install cuDNN

cuDNN = https://developer.nvidia.com/rdp/cudnn-archive#a-collapse765-10

For this project I need CUDA 10.0, so I am installing cuDNN v7.6.5 for CUDA 10.0 (https://developer.nvidia.com/compute/machine-learning/cudnn/secure/7.6.5.32/Production/10.0_20191031/cudnn-10.0-windows10-x64-v7.6.5.32.zip).

Make sure you are logged in; creating an account is free.

Extract the zip file just downloaded for cuDNN:

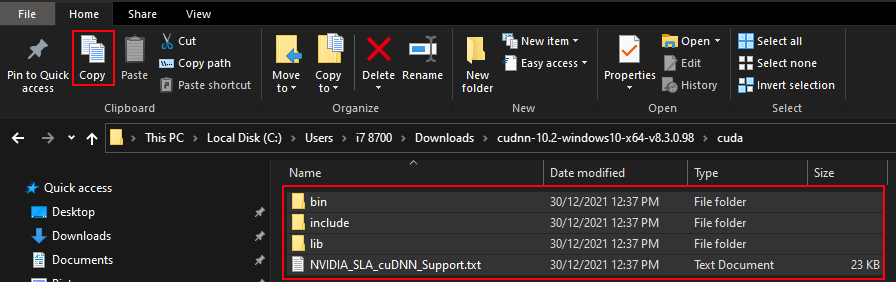

Copy the contents, including folders:

Locate the NVIDIA GPU Computing Toolkit folder, open the CUDA folder for your version (v10.0), and paste the contents inside it.
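If you would rather script the copy than drag folders in Explorer, something like the sketch below may work. `merge_tree` is a hypothetical helper written for Python 3.7 (it avoids `shutil.copytree(..., dirs_exist_ok=True)`, which needs Python 3.8+). The source and destination paths are up to you; writing into Program Files normally requires an administrator terminal.

```python
import os
import shutil


def merge_tree(src, dst):
    """Copy every file under src into dst, keeping sub-folders and
    overwriting files that already exist. Returns the number of files copied."""
    copied = 0
    for root, _dirs, files in os.walk(src):
        rel = os.path.relpath(root, src)
        target_dir = dst if rel == "." else os.path.join(dst, rel)
        os.makedirs(target_dir, exist_ok=True)
        for name in files:
            shutil.copy2(os.path.join(root, name),
                         os.path.join(target_dir, name))
            copied += 1
    return copied
```

For example, `merge_tree(extracted_cudnn_folder, cuda_v10_folder)` would merge the extracted cuDNN folders into the CUDA v10.0 folder, where both arguments are paths you supply for your own machine.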

labelImg = https://github.com/heartexlabs/labelImg/releases

tesseract-ocr = https://sourceforge.net/projects/tesseract-ocr-alt/files/tesseract-ocr-setup-3.02.02.exe/download or for all versions: https://github.com/UB-Mannheim/tesseract/wiki

Credit to: https://github.com/pythonlessons/YOLOv3-object-detection-tutorial

Step 3: Creating a Project in PyCharm

Open PyCharm and create a new project for object detection. Set up the necessary directories, including one for storing images and another for Python modules. Use the requirements.txt file and install the required modules using the pip install command.

Github Source: https://github.com/slyautomation/osrs_yolov3

Install Pycharm and Python: Clone a github project

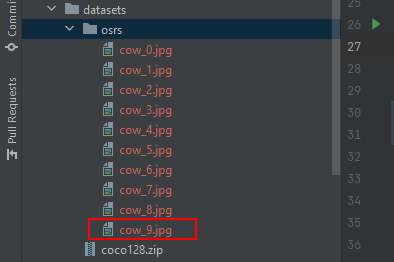

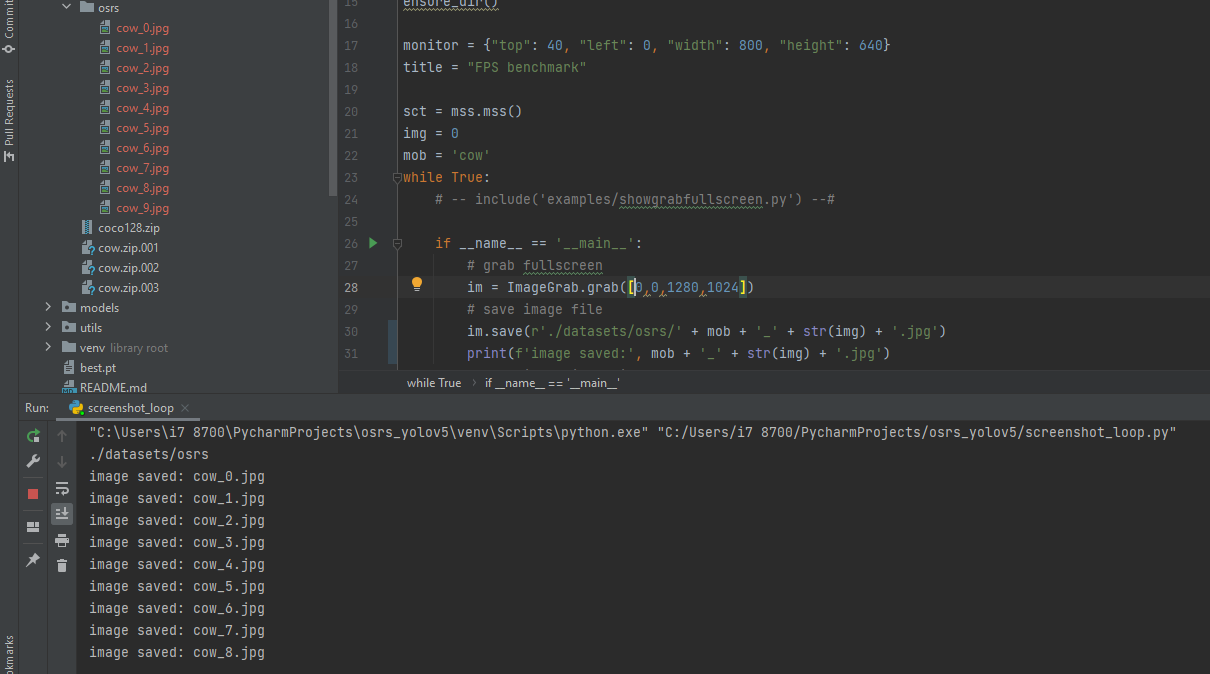

Step 4: Implementing the Screenshot Loop

In this step, we will capture screenshots of the objects we want to detect in our object detection model. Create a Python script to take screenshots at regular intervals while playing the game. Install additional modules such as Pillow, MSS, and PyScreenshot. Run the script and ensure that the screenshots are saved in the designated directory.

Script: screenshot_loop.py

Make sure to change the settings to suit your needs:

monitor = {"top": 40, "left": 0, "width": 800, "height": 640}  # adjust to the monitor or screen region you want to capture

img = 0  # image counter; when restarting the loop, set this higher than the last saved image number, e.g. img = 10

mob = 'cow'  # name of the object/mob to detect and train for

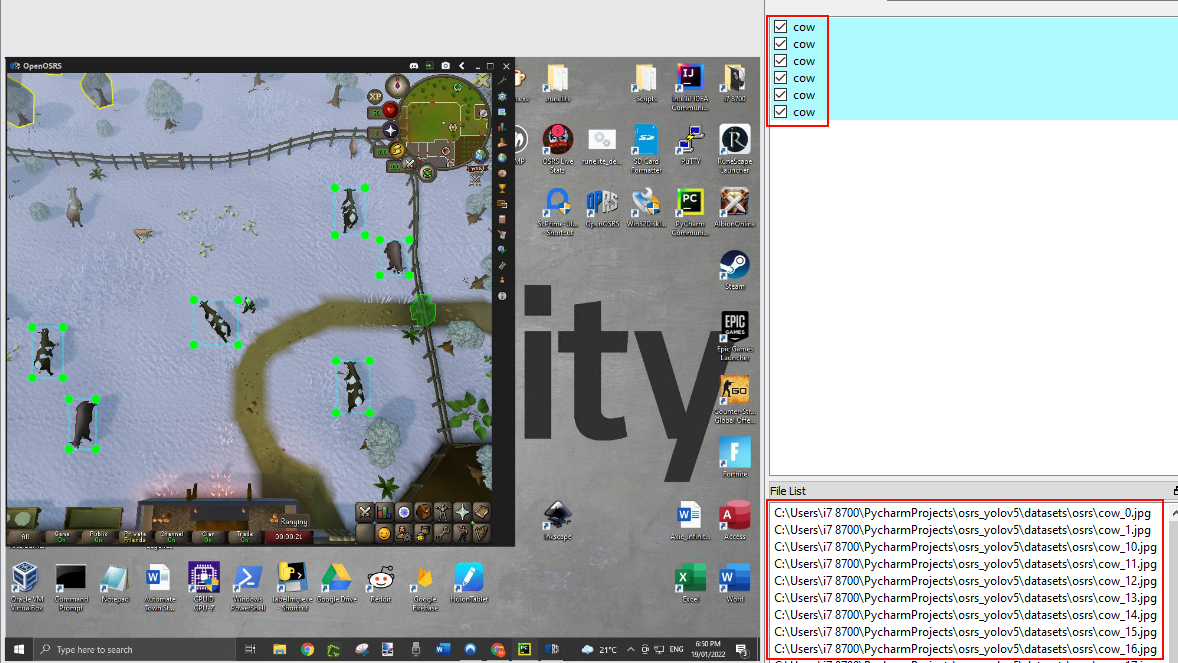

Run the script; images will be saved under datasets/osrs.
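For readers who want to see the shape of such a loop, here is a minimal sketch using MSS. It is not the repository's screenshot_loop.py: the file naming scheme, the `count` and `delay` parameters, and both function names are assumptions made for illustration. Only the `monitor`, `img`, and `mob` settings come from this guide.

```python
import os
import time


def screenshot_path(out_dir, mob, img):
    """Build the file path for screenshot number img of the given mob."""
    return os.path.join(out_dir, "{}_{}.png".format(mob, img))


def capture_loop(monitor, mob, start_img=0, count=10, delay=1.0,
                 out_dir=os.path.join("datasets", "osrs")):
    """Save `count` screenshots of the monitor region, one every `delay` seconds."""
    # Imported here so screenshot_path works without mss installed.
    import mss
    import mss.tools

    os.makedirs(out_dir, exist_ok=True)
    with mss.mss() as sct:
        for img in range(start_img, start_img + count):
            shot = sct.grab(monitor)  # monitor is a dict: top, left, width, height
            mss.tools.to_png(shot.rgb, shot.size,
                             output=screenshot_path(out_dir, mob, img))
            time.sleep(delay)
```

A call such as `capture_loop({"top": 40, "left": 0, "width": 800, "height": 640}, "cow", start_img=10)` continues numbering from image 10, so earlier screenshots are not overwritten.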

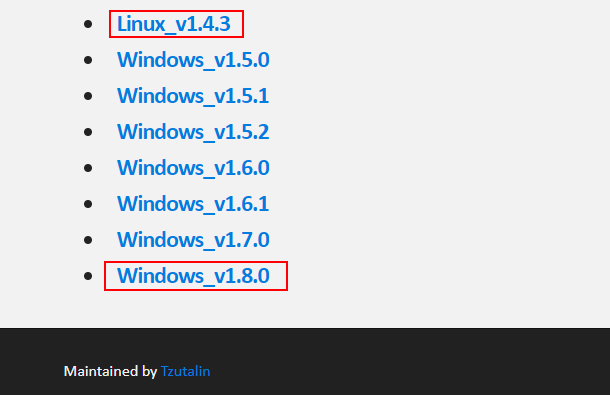

Step 5: Labeling the Images

Use a labeling tool like LabelImg to draw bounding boxes around the objects in the captured screenshots. Name the bounding boxes according to the objects they represent. Ensure that you label objects in various environments and backgrounds to improve generalizability.

labelImg = https://github.com/heartexlabs/labelImg/releases

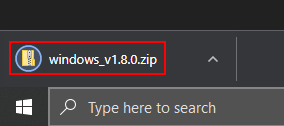

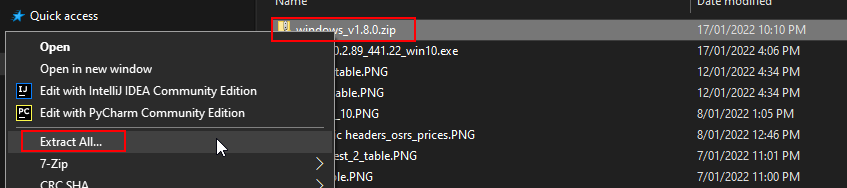

Click the link for the latest version for your OS (Windows or Linux). I am using Windows_v1.8.0.

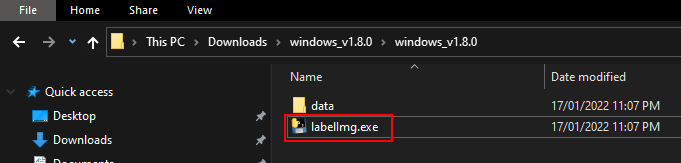

Open the downloaded zip file and extract the contents to the desktop or the default user folder.

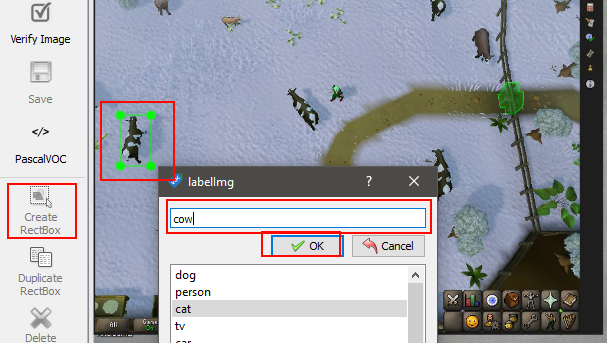

Using LabelImg

Open the application labelImg.exe

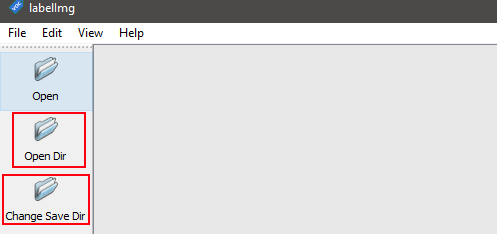

Click on ‘Open Dir’ and locate the images to be used for training the object detection model.

Also click ‘Change Save Dir’ and set it to the same location, so the YOLO labels are saved in the same place as the images.

If using the screenshot_loop.py script, point both ‘Open Dir’ and ‘Change Save Dir’ to the PyCharm project directory, then to the osrs_yolov3/OID/Dataset/train osrs folder.

Click the ‘Create RectBox’ button or use the keyboard shortcut W, draw the box, type the desired name for the annotated object, and click OK.

The right-hand panel lists all annotated objects with their names, and the lower-right panel lists the paths of all images in the currently selected directory.

Step 6: Generating the Dataset

Create a dataset directory and organize the labeled images and XML files containing the coordinates of the bounding boxes. Generate a text file that lists the paths to the images and their corresponding classes. Convert any PNG images to JPEG format if required.
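To make the text-file step concrete, the sketch below turns one LabelImg Pascal VOC XML annotation into a single line of the form `image_path x_min,y_min,x_max,y_max,class_id`. That line layout is an assumption based on common Keras YOLOv3 training scripts; the repository's own voc_to_yolov3.py may format things differently, and `voc_to_line` is a hypothetical name.

```python
import xml.etree.ElementTree as ET


def voc_to_line(xml_text, image_path, classes):
    """Turn one Pascal VOC annotation into 'path x1,y1,x2,y2,class_id ...'."""
    root = ET.fromstring(xml_text)
    parts = [image_path]
    for obj in root.iter("object"):
        name = obj.find("name").text
        if name not in classes:
            continue  # skip labels that are not being trained
        box = obj.find("bndbox")
        coords = [int(float(box.find(tag).text))
                  for tag in ("xmin", "ymin", "xmax", "ymax")]
        # The class id is the label's position in the classes list.
        parts.append(",".join(str(v) for v in coords + [classes.index(name)]))
    return " ".join(parts)
```

Each labeled image contributes one such line, with one comma-separated group per bounding box.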

Step 7: Training the Model

Prepare the necessary YOLOv3 files, including weights, configurations, and anchors. Create a directory for the YOLOv3 scripts. Adjust the batch size, sample size, and other parameters in the training script as per your requirements. Run the training script, ensuring that the GPU is properly detected.

Step 8: Installing Tesseract OCR

Download the Tesseract OCR library from the official repository. Install the program, making sure to select the necessary components. Specify the installation directory during setup.
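Python code usually needs to know where the Tesseract executable was installed. The sketch below assumes the third-party pytesseract wrapper (not named in this guide) and a typical Windows install path; both are assumptions, so adjust the candidate paths to the directory you chose during setup.

```python
import os
import shutil

# Typical Windows install location (assumption); change to match your setup.
DEFAULT_CANDIDATES = (r"C:\Program Files\Tesseract-OCR\tesseract.exe",)


def find_tesseract(candidates=DEFAULT_CANDIDATES):
    """Return the path to the tesseract executable, or None if not found."""
    on_path = shutil.which("tesseract")
    if on_path:
        return on_path
    for path in candidates:
        if os.path.isfile(path):
            return path
    return None


def configure_pytesseract(path):
    """Point pytesseract at the installed executable."""
    import pytesseract  # third-party: pip install pytesseract
    pytesseract.pytesseract.tesseract_cmd = path
```

If `find_tesseract()` returns None, the installer probably used a different directory than the ones listed in `DEFAULT_CANDIDATES`.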

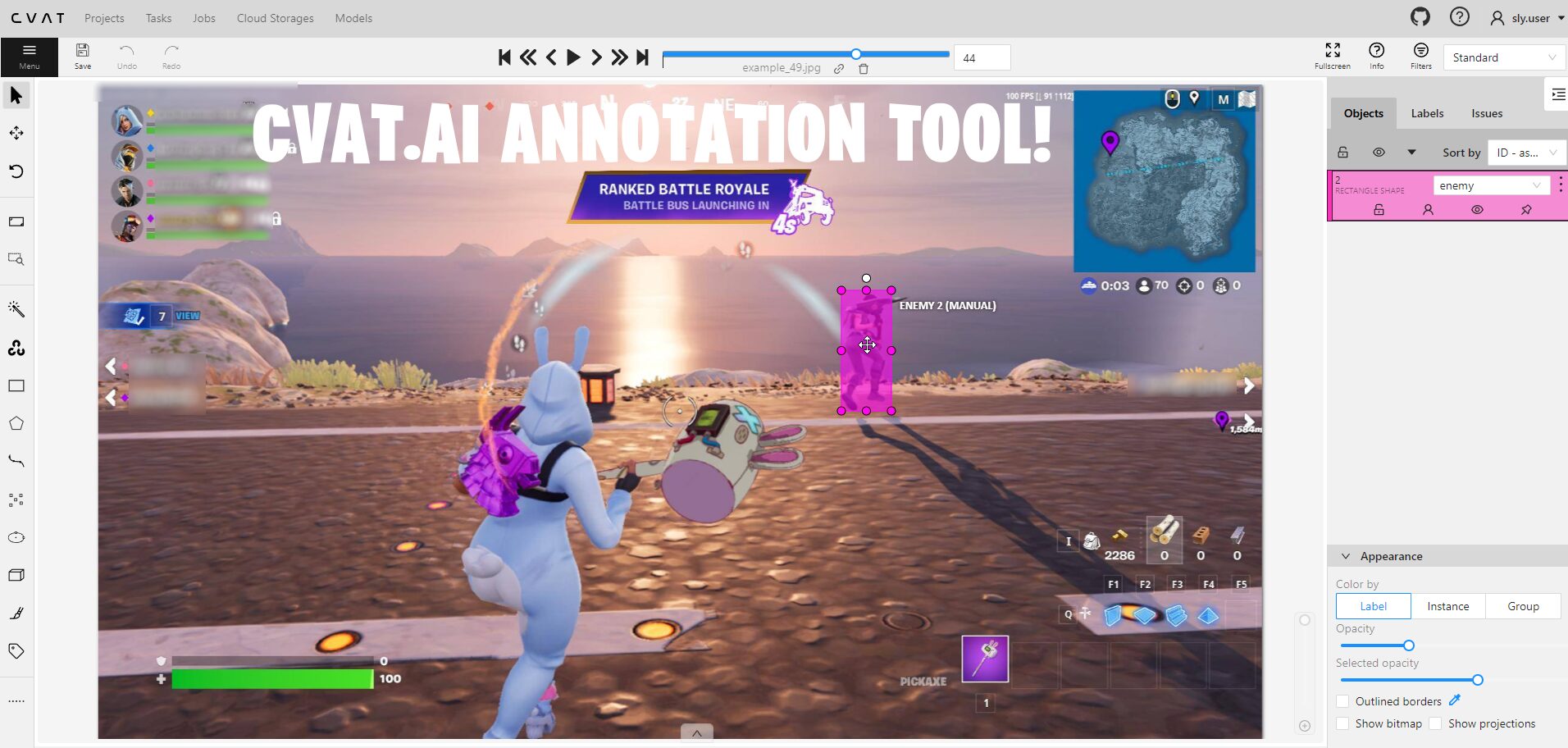

Step 9: Real-time Object Detection

Modify the real-time detection script to use the trained model and class for the desired object detection. Run the script and observe the results, with bounding boxes around the detected objects displayed on the screen.
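Once a detection is available, turning it into a mouse click is mostly coordinate arithmetic. The sketch below assumes each detection is a box `(x_min, y_min, x_max, y_max)` measured inside the captured screen region, and that the region is described by the same `monitor` dictionary used for screenshots. The function names are hypothetical and the detection script in the repository may be organized differently.

```python
def box_center_on_screen(box, monitor):
    """Convert a detection box (x_min, y_min, x_max, y_max), measured inside
    the captured region, into absolute screen coordinates of its centre."""
    x_min, y_min, x_max, y_max = box
    x = monitor["left"] + (x_min + x_max) // 2
    y = monitor["top"] + (y_min + y_max) // 2
    return x, y


def click_detection(box, monitor, duration=0.2):
    """Move the mouse to the centre of the box and click it."""
    import pyautogui  # imported lazily because it needs a display
    x, y = box_center_on_screen(box, monitor)
    pyautogui.moveTo(x, y, duration=duration)
    pyautogui.click()
```

Adding the `top` and `left` offsets matters because the model sees only the captured region, while PyAutoGUI works in full-screen coordinates.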

Troubleshooting:

Images and XML files for object detection

Example: unzip the files cows.z01, cows.z02, and cows.z03 into the directory OID/Dataset/train/cow/.

Add the image and XML files to the folder OID/Dataset/train/<name of class>. Add one folder under train for each class, named after the class.

Images must be in JPG format (use png_jpg.py to convert PNG files to JPG files).

Run voc_to_yolov3.py. This creates the image class path config file and the classes list config file.

If you installed from requirements.txt with pip and still get the error "cannot import name 'batchnormalization' from 'keras.layers.normalization'", download this file and save it to the model_data folder: https://drive.google.com/file/d/1_0UFHgqPFZf54InU9rI-JkWHucA4tKgH/view?usp=sharing

Resolving the BatchNormalization error

The error log for the BatchNormalization error is here: https://github.com/slyautomation/osrs_yolov3/blob/main/error_log%20batchnormalization.txt. It is caused by an incompatible version of TensorFlow. The version needed is 1.15.0:

pip install --upgrade tensorflow==1.15.0

A newer Keras release will still cause the BatchNormalization error, so downgrade Keras in the same way to 2.2.4:

pip install --upgrade keras==2.2.4

Refer to the successful log of python convert.py -w model_data/yolov3.cfg model_data/yolov3.weights model_data/yolov3.h5
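A quick way to confirm that the environment matches the versions named above is to compare them in Python. This is an illustrative sketch: `version_problems` and `installed_versions` are hypothetical helpers, and the required versions are simply the ones stated in this section.

```python
# Versions stated in this guide.
REQUIRED = {"tensorflow": "1.15.0", "keras": "2.2.4"}


def version_problems(installed, required=REQUIRED):
    """Return one message per package whose installed version differs."""
    problems = []
    for package, wanted in sorted(required.items()):
        found = installed.get(package)
        if found != wanted:
            problems.append("{}: found {}, need {}".format(package, found, wanted))
    return problems


def installed_versions():
    """Read the versions of the packages in the current environment."""
    import tensorflow
    import keras
    return {"tensorflow": tensorflow.__version__, "keras": keras.__version__}
```

Running `version_problems(installed_versions())` in the project's interpreter should return an empty list once both packages are pinned correctly.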

Conclusion: Congratulations! You have learned how to perform object detection with YOLOv3 and text recognition with Tesseract OCR, and how to combine them with PyAutoGUI. By following the steps outlined in this guide, you can automate tasks like mouse clicks and screen movements based on detected objects. Remember to experiment with different environments and objects to enhance the generalizability of your object detection model. Happy automating!


Someone said that you had to buy a domain, or your blogs weren’t seen by everybody, is that true? Do you know what a domain is? IF not do not answer please..

What’s a good fun blogging site to use with another person?

Very good article.

Great article.Really looking forward to read more. Cool.

Im thankful for the post.Much thanks again. Much obliged.

Great blog article.Much thanks again. Much obliged.

Awesome post.Really thank you! Really Cool.

wow, awesome blog post.Thanks Again. Really Great.

Really informative blog post.Really looking forward to read more. Cool.

Great post about this. I’m surprised to see someone so educated in the matter. I am sure my visitors will find that very useful.

A round of applause for your blog article.Really looking forward to read more. Want more.

Great blog.Much thanks again. Fantastic.

Major thankies for the article post.Really looking forward to read more. Really Cool.

Hey, thanks for the blog.Much thanks again. Much obliged.

Great, thanks for sharing this blog.Really thank you! Keep writing.

I am so grateful for your article.Really looking forward to read more. Really Cool.

I appreciate you sharing this blog article. Awesome.

Enjoyed every bit of your blog.Thanks Again. Really Cool.

I get pleasure from, cause I discovered exactly what I was taking a look for.You have ended my 4 day lengthy hunt! God Blessyou man. Have a nice day. Bye

Clubhouse davetiye arıyorsanız clubhouse davetiye hakkında bilgi almakiçin hemen tıklayın ve clubhouse davetiye hakkında bilgi alın. Clubhouse davetiye sizler için şu anda sayfamızda yer alıyor.Tıkla ve clubhouse davetiye satın al!

Why viewers still use to read news papers when in thistechnological world everything is presented on net?

Thanks again for the blog post. Want more.

I appreciate you sharing this article.Really thank you! Want more.

Fantastic blog article.Thanks Again. Cool.

I really liked your article post.Much thanks again. Want more.

Appreciate you sharing, great blog.Really thank you!

Great, thanks for sharing this article post.Much thanks again. Fantastic.

Great, thanks for sharing this blog post. Really Cool.

Im grateful for the post.Thanks Again. Cool.

wow, awesome article.Really thank you! Really Cool.

I loved your article.Really thank you! Cool.

Thanks for the article post.Thanks Again. Really Cool.

Thanks-a-mundo for the blog.Much thanks again. Keep writing.

Great, thanks for sharing this article post.Really looking forward to read more. Cool.

Im obliged for the article.Really thank you! Great.

I cannot thank you enough for the article post.Thanks Again. Fantastic.

I cannot thank you enough for the post. Great.

Fantastic blog article. Really Cool.

Very neat blog.Really thank you! Keep writing.

Awesome blog article.Really thank you! Will read on…

Im thankful for the article post.Really looking forward to read more. Will read on…

This is one awesome blog article.Really looking forward to read more. Awesome.

tadalafil tablets ip side effects for tadalafil tadalafil liquid

There as definately a great deal to know about this subject. I like all the points you have made.

I love it when people come together and share opinions. Great blog, stick with it!

Really appreciate you sharing this blog post.Thanks Again. Much obliged.

I have not checked in here for some time since I thought it was getting boring, but the last several posts are good quality so I guess I’ll add you back to my daily bloglist. You deserve it my friend 🙂

A round of applause for your blog.Really thank you! Really Cool.

Booked a move for the consequence of July. We at Trans Canada Movers Read Morecalgary to kelowna movers

Wow that was odd. I just wrote an really long comment but afterI clicked submit my comment didn’t show up. Grrrr… well I’m not writing allthat over again. Anyway, just wanted to saygreat blog!

provigil dose range best modafinil vendor when will provigil settlement checks be mailed why does modafinil make me sleepy

Im thankful for the blog article.Really thank you! Keep writing.

I really like and appreciate your blog post.Much thanks again. Keep writing.

Thanks for the blog.Really thank you! Will read on…

Appreciate you sharing, great post. Will read on…

I value the post.Really looking forward to read more.

Awesome blog.Really thank you! Really Cool.

It’s nearly impossible to find educated peopleabout this topic, however, you sound like you knowwhat you’re talking about! Thanks

A big thank you for your blog article.Much thanks again. Awesome.

Muchos Gracias for your blog.Really looking forward to read more. Fantastic.

Thanks so much for the blog.Much thanks again. Cool.

Major thankies for the article.Really looking forward to read more.

I truly appreciate this article post. Will read on…

I cannot thank you enough for the post.Thanks Again. Great.

Aw, this was an incredibly nice post. Taking a few minutes and actual effort to produce a really good article… but what can I say… I put things off a lot and never manage to get nearly anything done.

I value the post.Thanks Again.

Enjoyed every bit of your blog article.Really thank you! Really Cool.

Really appreciate you sharing this blog post.Much thanks again. Keep writing.

Thank you for your article post.Much thanks again. Keep writing.

Thanks-a-mundo for the blog.Thanks Again. Much obliged.

Im obliged for the article post.Thanks Again. Keep writing.

wow, awesome article. Really Cool.

Say, you got a nice blog article.Thanks Again. Really Cool.

Say, you got a nice article.Much thanks again.

Muchos Gracias for your blog post.Thanks Again. Really Great.

Well I sincerely enjoyed reading it. This article offered by you is very constructive for good planning.

Bardzo interesujące informacje! Idealnie to, czego szukałem pulsoksymetry medyczny pulsoksymetry medyczny.

Thankyou for all your efforts that you have put in this. very interesting info.

Thanks for ones marvelous posting! I definitely enjoyed reading it, you can be a great author.I will always bookmark your blog and may come back in the foreseeable future. I want to encourage you continue your great work, have a nice evening!

I don’t even know how I ended up right here, however I believed this post was once great. I do not recognize who you’re but certainly you’re going to a well-known blogger in the event you are not already. Cheers!

I blog quite often and I genuinely thank you for your content. This article has really peaked my interest. I am going to take a note of your blog and keep checking for new details about once per week. I subscribed to your RSS feed as well.

Yes! Finally someone writes about 100 pure organic skin care [Jacquelyn] care.

Hey, thanks for the article.Really thank you!

wow, awesome post.Really looking forward to read more. Will read on…

Thanks again for the blog post. Keep writing.

I really enjoy the post.Thanks Again. Keep writing.

Thank you for another fantastic post. The place else may anyone get that type of information in such a perfect method of writing?I have a presentation subsequent week, and I’m on the look forsuch information.

hydrochlorothiazide-lisinopril is hydrochlorothiazide a beta blocker

Hi friends, good paragraph and nice urging commented at this place, I am trulyenjoying by these.

I am so grateful for your post.Thanks Again. Will read on…

Lovely just what I was searching for.Thanks to the author for taking his clock time on this one.

A motivating discussion is definitely worth comment. I do believe that you ought to publish more about this subject, it may not be a taboo subject but usually people don’t speak about such issues. To the next! Cheers!!

Good answer back in return of this difficulty with solid arguments and explaining the wholething on the topic of that.

hanover apartments rentberry scam ico 30m$ raised portofino apartments

Hey there! This post couldn’t be written any better!Reading this post reminds me of my previous room mate! He alwayskept chatting about this. I will forward this write-up to him.Fairly certain he will have a good read. Thank you for sharing!

Muchos Gracias for your blog.Really looking forward to read more. Really Great.

That is a really good tip especially to those new to the blogosphere.Short but very precise information… Many thanks for sharing this one.A must read article!

Good day! I know this is kinda off topic but I was wondering if you knew where I could get a captcha plugin for my comment form? I’m using the same blog platform as yours and I’m having problems finding one? Thanks a lot!

Good blog you have here.. Itís difficult to find good quality writing like yours nowadays. I really appreciate individuals like you! Take care!!

Thanks again for the post.Really thank you!

I think this is a real great article post. Fantastic.

Hi, I do think this is an excellent blog. I stumbledupon it 😉 I am going to return yet again since I bookmarked it. Money and freedom is the best way to change, may you be rich and continue to guide others.

I truly appreciate this article post.Thanks Again. Cool.

Enjoyed every bit of your post. Really Cool.

This is one awesome blog. Will read on…

I really liked your article.Much thanks again. Keep writing.

Very good blog. Much obliged.

Great post.Really looking forward to read more. Fantastic.

We are searching for some people that are interested in from working their home on a part-time basis. If you want to earn $500 a day, and you don’t mind writing some short opinions up, this is the perfect opportunity for you!

We are searching for some people that are interested in from working their home on a part-time basis. If you want to earn $100 a day, and you don’t mind developing some short opinions up, this is the perfect opportunity for you!

Thank you ever so for you blog.Much thanks again.

Great article.Really looking forward to read more. Fantastic.

Say, you got a nice blog.Much thanks again. Much obliged.

Im obliged for the article. Fantastic.

Heya i’m for the first time here. I found this board and I findIt truly useful & it helped me out a lot. I hope to give something back and aid others like you aidedme.

Im thankful for the article post.

Thanks so much for the blog post.Really thank you! Cool.

Great, thanks for sharing this blog post.Thanks Again. Cool.

Really enjoyed this article. Fantastic.

Really appreciate you sharing this article post.Thanks Again. Much obliged.

Thanks again for the article.Thanks Again. Much obliged.

Muchos Gracias for your post. Really Cool.

Your blog has intriguing material. I believe you ought to write even more as well as you will certainly obtain even more fans. Keep creating.

Yesterday, while I was at work, my cousin stole my iPad and tested to see if it can survive a 30 foot drop,

just so she can be a youtube sensation. My iPad is now destroyed and

she has 83 views. I know this is entirely off topic but I had to share it with someone!

My site :: nordvpn coupons inspiresensation

A big thank you for your post.Really thank you! Really Cool.

Профессиональный сервисный центр по ремонту бытовой техники с выездом на дом.

Мы предлагаем:сервисные центры по ремонту техники в мск

Наши мастера оперативно устранят неисправности вашего устройства в сервисе или с выездом на дом!

I think this is a real great post.Thanks Again.

Im obliged for the blog.Thanks Again. Want more.

Tin Tức, Sự Kiện Liên Quan Đến Thẳng Bóng Đá Nữ Giới nha cai so 1Đội tuyển chọn nước Việt Nam chỉ muốn một kết trái hòa có bàn thắng nhằm lần thứ hai góp mặt trên World Cup futsal. Nhưng, nhằm làm được như vậy

Hi there, You have done an excellent job.

I will certainly digg it and personally recommend to my friends.

I am sure they will be benefited from this site.

Also visit my web page nordvpn coupons inspiresensation

This is one awesome article post.Much thanks again. Much obliged.

Admiring the time and effort you put into your website and

in depth information you offer. It’s good to come across

a blog every once in a while that isn’t the same old rehashed material.

Wonderful read! I’ve bookmarked your site and I’m adding your RSS

feeds to my Google account.

Here is my page – nordvpn Coupons inspiresensation, s.bea.sh,

A round of applause for your post.Really thank you! Awesome.

Thank you for your post.Much thanks again.

Fantastic blog article.Thanks Again.

Very neat article. Really Cool.

You could definitely see your enthusiasm in the work you write.The arena hopes for more passionate writers such as you who are notafraid to mention how they believe. At all timesfollow your heart.

Thanks again for the post.Thanks Again. Will read on…

Very informative article.Really looking forward to read more. Want more.

I value the blog post.Really thank you! Really Cool.

350fairfax nordvpn cashback

When someone writes an post he/she keeps the image of

a user in his/her mind that how a user can know it.

So that’s why this post is great. Thanks!

When I originally commented I clicked the “Notify me when new comments are added” checkbox and now each time a comment is added I get three e-mails with the same comment. Is there any way you can remove people from that service? Cheers!

Muchos Gracias for your article.Really looking forward to read more. Will read on…

Very neat blog article.Really thank you! Cool.

Say, you got a nice article post.Much thanks again. Awesome.

It’s wonderful that you are getting thoughts from this paragraph as well as from our argument made at this time.

Fantastic article. Cool.

Appreciate you sharing, great blog. Fantastic.

I really enjoy the blog.Much thanks again. Fantastic.

Muchos Gracias for your blog. Really Great.

I am so grateful for your article. Cool.

I really like and appreciate your article post.Really looking forward to read more. Much obliged.

Major thankies for the post.Thanks Again. Want more.

I truly appreciate this article post.Much thanks again. Will read on…

I loved your article.Really looking forward to read more. Much obliged.

Awesome article post.Really looking forward to read more. Really Cool.

Major thanks for the article.Really thank you! Keep writing.

Great blog article.Really thank you! Cool.

Im obliged for the blog.Much thanks again. Really Cool.

Awesome article.Really thank you! Will read on…

Awesome blog post.Really thank you!

A big thank you for your blog.Much thanks again. Want more.

Thanks for the post.Thanks Again. Will read on…

Very informative post.Much thanks again. Fantastic.

I value the blog article. Want more.

Hey, thanks for the article post. Want more.

I loved your post. Great.

Very informative article post. Really Great.

Thank you ever so for you blog.

oKsnJ QtjQP mPi NZzaWp pIJEdbF rjoCOiFR

Really enjoyed this post.Really thank you!

I think this is a real great blog.Really looking forward to read more.

Very neat blog. Awesome.

I think this is a real great blog post. Great.

I am so grateful for your article post. Want more.

A round of applause for your article.Thanks Again. Awesome.

Really enjoyed this article.Much thanks again. Great.

Really informative blog article.Really looking forward to read more. Awesome.

Im thankful for the blog article.Really thank you! Fantastic.

Really appreciate you sharing this blog post.Really looking forward to read more. Keep writing.

I cannot thank you enough for the blog article.Really looking forward to read more.

Appreciate you sharing, great blog.Much thanks again. Will read on…

Im thankful for the blog.Really thank you! Really Cool.

I loved your blog post.Really looking forward to read more. Great.

A big thank you for your article post.Really thank you!

Awesome article.Really thank you! Will read on…

Wow, great blog post.Thanks Again. Will read on…

Fantastic post.Much thanks again. Fantastic.

Thank you ever so for you post.Really thank you!

I am so grateful for your blog post.Really thank you! Want more.

I think this is a real great blog.Really thank you! Really Cool.

Enjoyed every bit of your blog post.Really looking forward to read more. Fantastic.

Awesome blog article.Really thank you! Cool.

Fantastic blog.Really looking forward to read more. Really Cool.

Very informative blog.Thanks Again. Awesome.

Thanks for the blog post.Really thank you! Great.

Major thanks for the blog post. Much obliged.

Thanks for sharing, this is a fantastic blog post.Really thank you! Really Cool.

Very good article.Much thanks again. Cool.

I really like and appreciate your article post. Awesome.

Thanks for the blog.Really looking forward to read more. Fantastic.

I loved your blog post.Much thanks again. Cool.

Im grateful for the blog.

Enjoyed every bit of your article.Much thanks again. Fantastic.

Thanks a lot for the blog article.Thanks Again. Fantastic.

Thank you for your article post. Fantastic.

Great, thanks for sharing this blog.Thanks Again. Fantastic.

Really appreciate you sharing this blog article.Really thank you! Fantastic.

I think this is a real great article.Really thank you! Awesome.

I truly appreciate this blog post.Much thanks again. Much obliged.

I appreciate you sharing this article post.Much thanks again. Awesome.

Hey, thanks for the blog.Much thanks again. Keep writing.

Предлагаем услуги профессиональных инженеров офицальной мастерской.

Еслли вы искали ремонт холодильников gorenje адреса, можете посмотреть на сайте: ремонт холодильников gorenje адреса

Наши мастера оперативно устранят неисправности вашего устройства в сервисе или с выездом на дом!

Thanks for the post.Much thanks again. Great.

A round of applause for your article.Really thank you! Fantastic.

Thanks again for the blog.Much thanks again. Much obliged.

Wow, great blog post.Much thanks again. Really Great.

I think this is a real great article post.Much thanks again. Will read on…

Thanks for the blog.Really looking forward to read more. Great.

Really informative blog post.Thanks Again. Really Great.

Very neat article.

Thanks-a-mundo for the article.

Профессиональный сервисный центр по ремонту Apple iPhone в Москве.

Мы предлагаем: качественный ремонт айфонов в москве

Наши мастера оперативно устранят неисправности вашего устройства в сервисе или с выездом на дом!

Really appreciate you sharing this blog post.Thanks Again. Fantastic.

Im thankful for the article post.Thanks Again. Great.

Thanks for the post.Thanks Again. Great.

Very neat post.Much thanks again. Great.

Im thankful for the blog post.Much thanks again. Fantastic.

I appreciate you sharing this blog article. Really Cool.

I truly appreciate this blog.Much thanks again. Much obliged.

wow, awesome blog post. Awesome.

Salam hormat atas tulisan ini, cukup membuka pandangan, terkait kiprah partai

Golkar dan Golkar SulSel.

Thanks-a-mundo for the blog post.Really thank you! Fantastic.

wow, awesome blog.Really looking forward to read more. Cool.

Thanks for the blog.Really thank you! Fantastic.

A big thank you for your post.Much thanks again. Keep writing.

I loved your blog post.Much thanks again. Want more.

Awesome post.Much thanks again. Really Great.

Great blog.Much thanks again.

Informative piece. I understood. Keep it up!

Thanks for sharing, this is a fantastic blog post.Really thank you! Fantastic.

Im grateful for the blog article.Really thank you! Awesome.

Thank you ever so for you blog.Much thanks again. Really Cool.

I really liked your blog article. Great.

I am so grateful for your post.Thanks Again. Really Cool.

I appreciate you sharing this article post.Thanks Again. Really Great.

I truly appreciate this article post.Really thank you! Really Great.

Very informative blog article.Thanks Again. Cool.

Muchos Gracias for your post.Thanks Again. Will read on…

Major thanks for the article.Really thank you!

Major thanks for the article.Thanks Again. Great.

I never thought about it that way, but it makes sense!Static ISP Proxies perfectly combine the best features of datacenter proxies and residential proxies, with 99.9% uptime.

I loved your blog article.Much thanks again. Really Great.

Great, thanks for sharing this blog article. Really Cool.

Very good blog.Really thank you! Much obliged.

I really like and appreciate your article.Thanks Again.

I love reading through a post that can make people

think. Also, many thanks for permitting me

to comment!

my web-site; vpn

I am so grateful for your article post.Really thank you! Keep writing.

Awesome blog.Really thank you! Awesome.

I value the blog article.Much thanks again. Keep writing.

Thanks-a-mundo for the blog.Really thank you! Great.

I really enjoy the post.Much thanks again. Cool.

I think this is a real great blog.Really looking forward to read more. Keep writing.

Thanks again for the blog article.Really looking forward to read more. Keep writing.

I appreciate you sharing this article post.Much thanks again. Fantastic.

A round of applause for your post.Really looking forward to read more. Want more.

Thanks a lot for the post.Thanks Again. Awesome.

Appreciate you sharing, great post. Want more.

Thanks again for the article.Thanks Again. Much obliged.

Very informative post.Really looking forward to read more. Fantastic.

Im grateful for the blog post. Much obliged.

Really informative blog post.Really looking forward to read more. Want more.

I loved your blog article. Will read on…

Wow, great blog article.Really thank you! Cool.

Thanks again for the blog article.

Major thanks for the blog post.Really thank you! Will read on…

Thanks for the blog.Really thank you! Really Cool.

Very good article post.Much thanks again. Cool.

Great blog post.Much thanks again.

Thank you for your article.Really thank you!

Very neat blog.Thanks Again. Cool.

Very informative post. Great.

Hey, thanks for the blog post. Fantastic.
